The means and inclination to test SEO changes are growing within the industry (finally), but a willingness to test doesn't guarantee that a test will be successful or worth running.

Not everything is worth testing, and not everything is easily testable. What follows are some lessons and examples from my experience testing SEO changes (split testing or otherwise).

What to Test

How do we decide what to test? Selecting the right test is not always as simple as asking “which will have the biggest impact?” Here are some considerations when choosing a test:

- Speed of change: How quick and easy is it to make the change? Even if you can modify the page with test tooling, do you have what you need (logic/information) to make the change?

- Stakeholder sign-off: Who else within the business needs to sign off on the change? If you are significantly impacting the user experience and will need attention from other teams (UX or ecommerce teams, for example), you can seldom run these tests without getting buy-in first.

- Avoid clashing with UX testing: If other “conventional” UX tests are running, make sure you don't make SEO changes while another test is in progress. The two tests may clash and invalidate the results.

- The impact of the change: Do you think the test is worth running? Sometimes, lightweight tests aren't worth the effort.

- Is Google likely to crawl and index the page? If you have a large or complicated site, or JavaScript is required to render key content, it may take Google too long to index the test bucket. You want your test to be complete within 4-6 weeks at most. If Google doesn't pick up the changes within two weeks, it may invalidate the results.

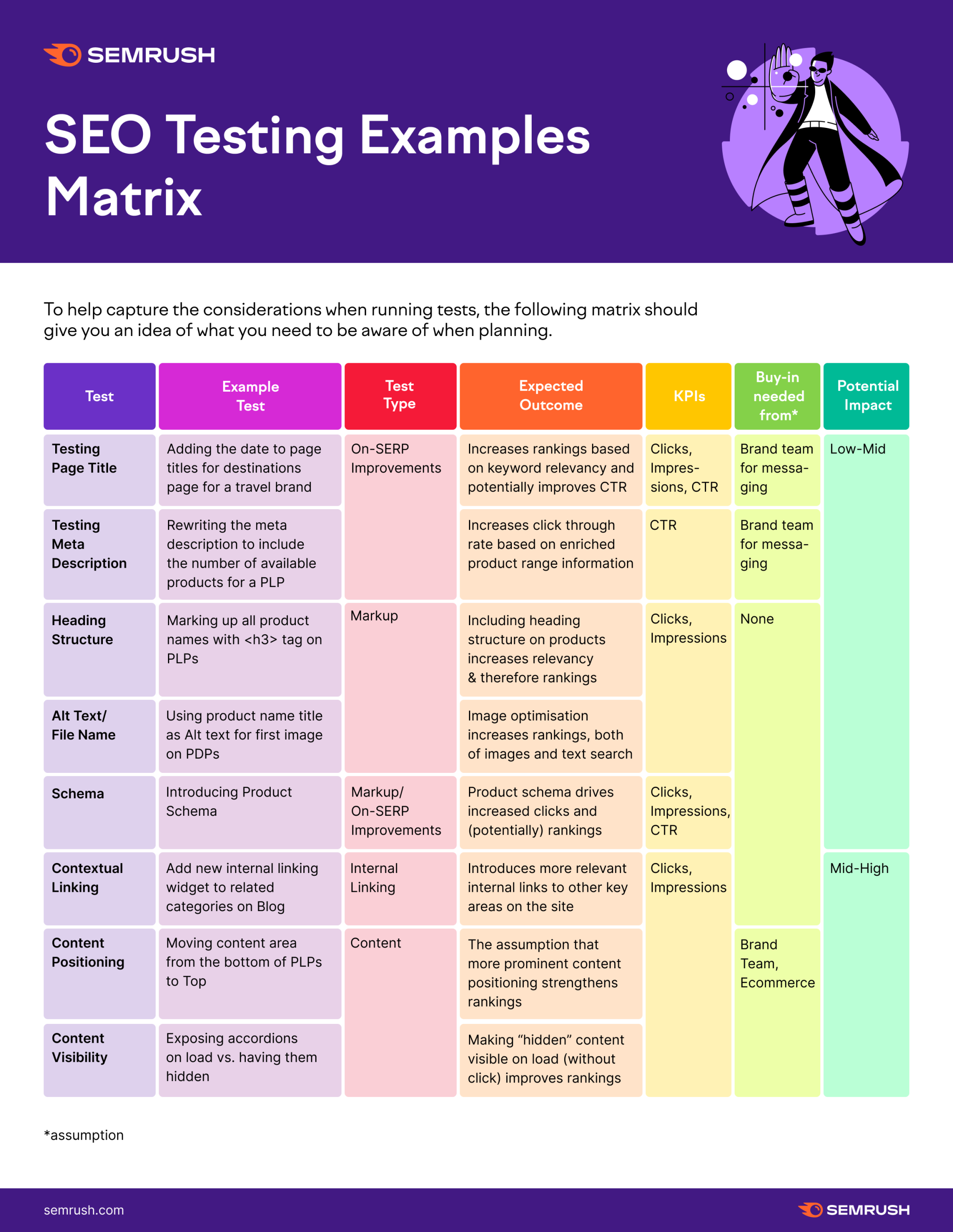
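One way to check the last point is to measure how much of the test bucket Googlebot has actually requested since the change went live. The sketch below assumes you already have (URL, timestamp) pairs from your server logs, filtered to verified Googlebot requests; the function name and example data are hypothetical. A crawl is only a proxy for indexing, so treat a low number as an early warning rather than proof.

```python
from datetime import datetime

def crawl_coverage(test_urls, googlebot_hits, change_time):
    """Share of test-bucket URLs requested by Googlebot since the change went live.

    googlebot_hits is an iterable of (url, timestamp) pairs, assumed to be
    already filtered to verified Googlebot requests from server logs.
    """
    test_set = set(test_urls)
    if not test_set:
        return 0.0
    # Count each test URL once, and only if it was crawled after the change.
    crawled = {url for url, ts in googlebot_hits if url in test_set and ts >= change_time}
    return len(crawled) / len(test_set)

# Hypothetical example data: /a was crawled after the change, /b only before it.
change = datetime(2024, 3, 1)
hits = [
    ("/a", datetime(2024, 3, 2)),
    ("/b", datetime(2024, 2, 20)),
    ("/a", datetime(2024, 3, 5)),
]
print(crawl_coverage(["/a", "/b", "/c", "/d"], hits, change))  # 0.25
```

Tracking this figure daily during the first two weeks gives an early signal of whether the test is likely to produce usable results.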

With the above in mind, let’s move on to the examples.

This list isn’t exhaustive. The brilliant thing about testing is that every website, niche, and tech setup will provide opportunities to test in ways that will surprise and shock you.

*For every example here, we assume you have the team required to make the technical change.

Insights on Different SEO Change/Test Types

Tests and changes will always deliver different results depending on the site and the circumstances around the test itself. In some situations, what benefits one site may actually harm another, so applying lessons from one site's tests to another site is not always wise.

Even so, some lessons from the experience of testing are worth sharing, if only to help you develop your own thinking and approach.

Titles/Meta Descriptions Can Be Fertile Ground

If your titles and descriptions aren’t especially good but you don’t have the resources to change them all, a split test on a subset of pages is a great way to find out whether a new approach is worth rolling out.

This is a relatively quick and easy test to apply (with the right logic available), and it is easy to see on the SERPs if Google has “seen” the test.
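As an illustration of what “the right logic” might look like, here is a minimal sketch that deterministically assigns each URL to a test or control group by hashing it, then builds a templated title only for test pages. The salt, the page fields (`product`, `price`, `brand`), and the title template are all hypothetical placeholders, not a recommended title format.

```python
import hashlib

# Hypothetical salt: changing it reshuffles every page into new groups.
TEST_SALT = "title-test-1"

def bucket(url: str, salt: str = TEST_SALT) -> str:
    """Deterministically assign a URL to 'test' or 'control' (roughly 50/50)."""
    digest = hashlib.sha256((salt + url).encode("utf-8")).hexdigest()
    return "test" if int(digest, 16) % 2 == 0 else "control"

def build_title(page: dict) -> str:
    """Templated title for test-group pages; the existing title for control pages."""
    if bucket(page["url"]) == "test":
        # Hypothetical template built from structured page data.
        return f"{page['product']} from {page['price']} | {page['brand']}"
    return page["title"]

page = {
    "url": "/shoes/trail-runner",
    "title": "Trail Runner",
    "product": "Trail Runner Shoes",
    "price": "$79",
    "brand": "ExampleBrand",
}
print(bucket(page["url"]), "->", build_title(page))
```

Hashing keeps the assignment stable across deploys, so a page never flips between groups mid-test.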

Trying to Change CTR Can Be Frustrating

Changing CTR can be challenging because you are assuming the change will deliver a net benefit to the user’s experience on the SERP. Because these changes are usually made at scale across the test group, some pages may improve while others get worse.
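A flat group-level CTR can hide pages that moved in opposite directions. This sketch, using hypothetical (clicks, impressions) figures per URL such as you might export from Search Console, compares the group-level CTR with per-page changes.

```python
def ctr(clicks, impressions):
    """Click-through rate; 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0

def aggregate_ctr(data):
    """Group-level CTR: total clicks divided by total impressions."""
    clicks = sum(c for c, _ in data.values())
    impressions = sum(i for _, i in data.values())
    return ctr(clicks, impressions)

def ctr_changes(before, after):
    """Per-page CTR change for URLs present in both periods."""
    return {url: ctr(*after[url]) - ctr(*before[url]) for url in before.keys() & after.keys()}

# Hypothetical (clicks, impressions) per URL for the test group.
before = {"/a": (50, 1000), "/b": (40, 1000)}
after = {"/a": (70, 1000), "/b": (20, 1000)}

print(aggregate_ctr(before), aggregate_ctr(after))  # 0.045 0.045
changes = ctr_changes(before, after)
print(sorted(url for url, delta in changes.items() if delta < 0))  # ['/b']
```

Here the group CTR is unchanged, yet one page gained and the other lost, which is exactly the mixed outcome described above.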

A classic example is adding review ratings via schema (or via a title change). This can highlight products or services that people rate well, but anything with poor reviews will also get greater attention, and putting bad reviews in the SERPs rarely helps clicks!

Mark-Up Changes Can Be Quick & Easy

Mark-up changes can be relatively easy to make. Testing them can be very useful for justifying template changes that roll out “best practices” in headings or schema, or even for building the case for a more “radical” change without fully rolling it out first.

While a mark-up change can be a good test, the results aren’t always significant.

Small changes often produce minor improvements, so you’ll need to factor this into your decision-making.

You Can Hypothesize Losses Too

Depending on the testing circumstances, you can also hypothesize that a change will cause a decline in performance. If another team wants to make a change you’re not confident about, you can insist on testing it to validate your hypothesis that it is a bad idea.

Testing Site-Wide Elements

Testing site-wide elements (for example, the main menu or footer) as an A/B test is highly problematic. We need to serve Google two roughly equal page groups (in traffic size and user behavior) as test and control, but that isn’t possible with a persistent element. A menu that differs on some pages but not others would be bad for both search engines and users.
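For page templates where an A/B test is possible, the requirement for two roughly equal page groups can be approximated with a matched-pair split: rank pages by traffic, take them in consecutive pairs, and randomly send one page of each pair to each group. This is a minimal sketch with hypothetical traffic figures; simple random assignment alone does not guarantee balanced traffic, which is why pairing is used here.

```python
import random

def balanced_split(page_traffic, seed=42):
    """Split pages into test/control groups with similar total traffic.

    Pages are sorted by traffic, taken in consecutive pairs, and one page of
    each pair is randomly assigned to each group (a simple matched-pair design).
    """
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    ranked = sorted(page_traffic, key=page_traffic.get, reverse=True)
    test, control = [], []
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)
        test.append(pair[0])
        control.append(pair[1])
    if len(ranked) % 2:
        control.append(ranked[-1])  # odd page out goes to control
    return test, control

# Hypothetical monthly organic sessions per URL.
traffic = {"/a": 1200, "/b": 1100, "/c": 500, "/d": 450, "/e": 90, "/f": 80}
test, control = balanced_split(traffic)
print(sum(traffic[u] for u in test), sum(traffic[u] for u in control))
```

The traffic gap between groups can be no larger than the sum of the within-pair differences (160 sessions in this example). Balancing on user behavior as well would need additional matching criteria.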

For site-wide elements, the primary workaround is a time-based test: make the change, then run a causal impact analysis on the data afterward. You lose the advantages of an A/B test, but the alternatives aren’t strong enough to provide trustworthy conclusions.

Time to Get Testing

Once you have a strong hypothesis, buy-in from the appropriate stakeholders, the ability to test and measure the results, and the means to serve the test itself (if you’re A/B testing), you are in a prime position to gain some great insights and data. You can start testing with SplitSignal, Semrush’s tool for SEO split testing.